Is It a Permitting Problem, or Is It Your Application Form?

How I came to think about permitting, and the framework behind the 2026 Landscape Report

This is the story of how I started thinking about permitting, and the questions I use when someone says theirs is broken. It's the framework piece I promised in March's 2026 Landscape Report announcement.

Most conversations about broken permitting follow a pattern. Someone tells me their permitting is 'a disaster.' I nod, order another round, and ask for a specific example. We move on to schedules, unanswered emails, third rounds, confusion over who owns what, and, eventually, whether a dashboard could solve the problem. Confusion is easy to criticize. So conversations often end up there, regardless of the real problem.

That was the loop. Before the framework, conversations about "Permitting" (big P) were stories of frustration, venting, some very amusing anecdotes, and statements like, "Sir, this is a Wendy's." I could commiserate, share the frustration, and assure them they weren't the only folks dealing with a system that made no sense. After a while, I started sketching out what the workflows looked like, the language people used, and how work did (or didn't) connect.

I needed a way to ask "which part do you think is broken?" without making people feel like I was dismissing their answer. Not which agency, not which permit type. Which part of the work?

What Changed

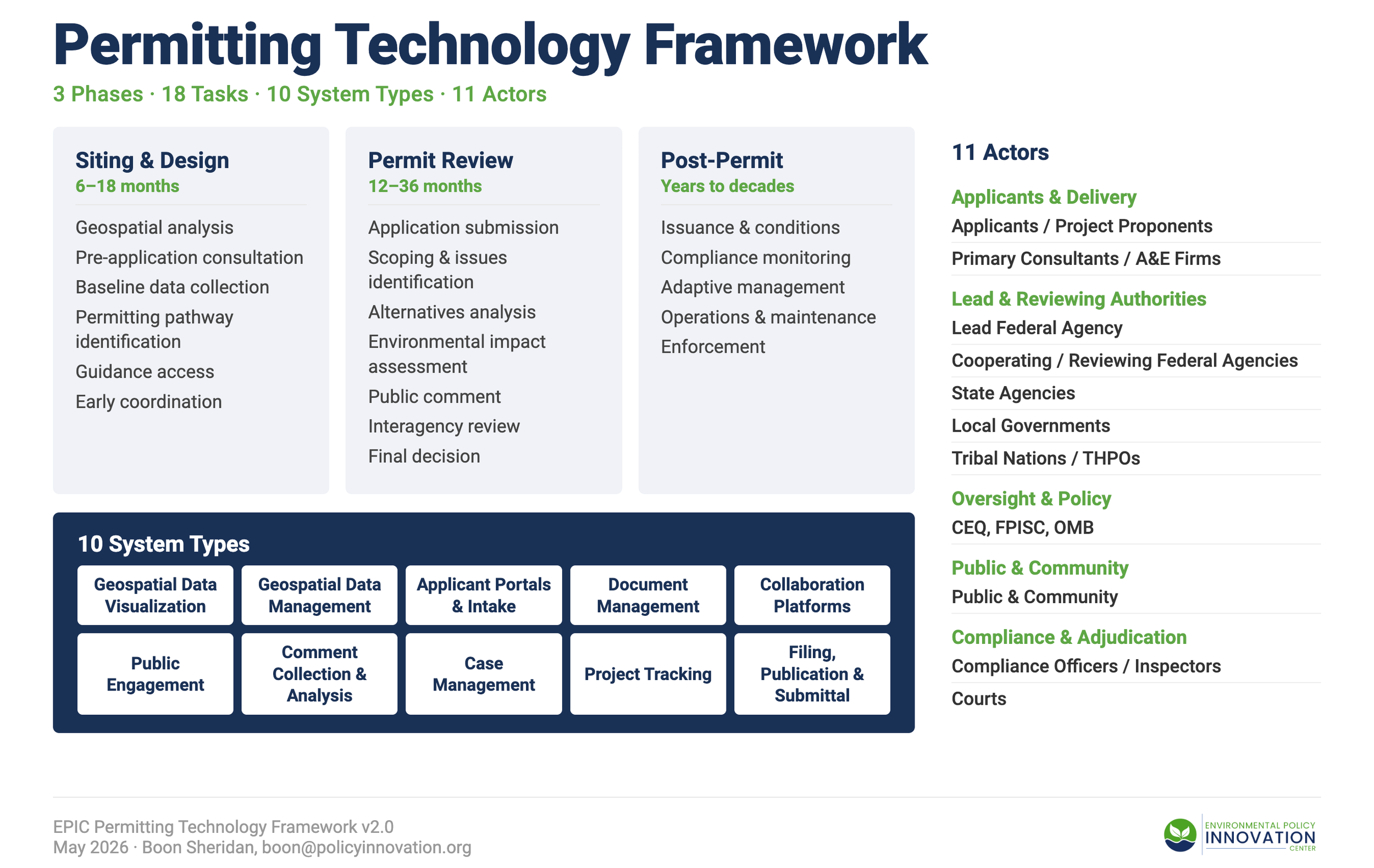

What I eventually built, with a lot of help over a lot of coffee and a fresh notebook, was a way to break permitting into smaller pieces to point at: three phases of work, eighteen tasks distributed across those phases, ten kinds of software tools that support the tasks, and eleven categories of people doing the work.

These sketched-out ideas could prompt follow-up questions that take the conversation deeper into the details. "Our permitting is broken," turned into, "we get too many garbage applications." "We get too many garbage applications," turned into, "it's not their fault really, the form hasn't been updated in a decade." "It's not their fault, really," turned into, "wait a minute, why haven't we updated that form in a decade?"

Once I had a diagnostic that could turn abstract frustration into better questions, it became a way to catalog all the permitting software out there. There are hundreds of companies that claim to solve 'permitting,' but they often focus on just one part of the problem. A GIS viewer and a case management system are both referred to as "permitting software," but you really can't call them apples-to-apples. Pretending they are is how vendor comparison spreadsheets become confusing way too fast. Ask me how. (Please don't ask me how.)

Three Phases

Most permitting conversations collapse into "the review takes too long." That framing treats permitting as a single event, when most of the friction lies in the surrounding parts.

Phase 1: Siting and design is everything before anyone touches an application form. Where could this project go? What's already on the ground? Has anyone checked whether there's a cultural site, an endangered species, or a wetland that will stop the whole thing six months from now? This is the phase people skip or rush through, and it's the phase that punishes you later. Every practitioner I've talked to has a story about a project that blew up during review because somebody didn't do the homework. The frustrating part is that the homework isn't hard. It's just that folks don’t tend to get excited for "do the homework before there's an application to do homework on."

Note: There are consultants and applicants who are very good at this type of work, especially with NEPA and federal permits. However, not every project has the expertise, budget, and timeline to get the work done.

Phase 2: Permit review is the part everyone pictures when they hear the word "permitting." Application in, analysis done, decision out. NEPA documents, Section 106 consultation, 401 certifications, 404 permits. This is the phase that gets blamed for delays, and sometimes it deserves it. But a lot of what looks like a slow review is actually Phase 1 work that didn't happen on time. The reviewer sends back a request for baseline data that should have been collected a year ago, and now everyone's waiting while the applicant scrambles. Most permitting tools live here because that's where the money is.

Phase 3: Post-permit is the long tail that almost nobody talks about. The permit gets issued, and everyone exhales. Then someone has to make sure the project actually does what the permit said it would. Monitoring compliance, tracking mitigation commitments, handling modifications, and occasionally, enforcement. This phase lasts for years. Sometimes decades. And it has the fewest tools, the least attention, and the most consequences when it fails. If a 2019 mitigation commitment isn't being honored in 2026, who notices? Right now, in most places, the honest answer is nobody.

Go to the full briefing and see the full list here.

The Eighteen Tasks

The eighteen tasks came from listening to how people actually described their work when they forgot they were supposed to be talking about "permitting." They said things like, "I spend three weeks chasing down whether anyone's looked at the wetlands on the east parcel." Or, "My job is basically making sure the application isn't missing page six again." Or, on one memorable occasion, "I just forward things to people who forward things to other people."

The tasks are the verbs of permitting, the actual work that gets done (or doesn't) between "someone wants to build something" and "someone checks whether they built what they said they would." Scoping a project's boundaries. Collecting baseline environmental data. Routing an application to the right reviewer. Running a public comment period that nobody reads (but should, and maybe could if everything were more streamlined). Tracking whether a 2019 mitigation commitment is still being honored in 2026.

I didn't start with eighteen. I started with about forty, most of which turned out to be the same task described differently by people in different agencies. "Stakeholder coordination," "interagency consultation," and "getting everyone in the same room" are, functionally, the same work wearing different lanyards. Collapsing those down is where the diagnostic value lives. When two agencies use different words for the same task, they don't realize they're working the same problem. The vocabulary is smaller than people think, and the problems are more shared than anyone admits. The briefing names all eighteen.
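
For readers who think in spreadsheets or scripts, the collapsing step can be pictured as a lookup table from the phrases people use to one shared label. This is only a minimal sketch: the three phrases come from this post, but the canonical label `coordination`, the table `TASK_ALIASES`, and both function names are illustrative assumptions, not the task names used in the briefing.

```python
# Hypothetical alias table. The three phrases are quoted in the post; the
# canonical label "coordination" is an illustrative assumption, not one of
# the eighteen task names defined in the briefing.
TASK_ALIASES = {
    "stakeholder coordination": "coordination",
    "interagency consultation": "coordination",
    "getting everyone in the same room": "coordination",
}


def canonical_task(description: str) -> str:
    """Map a free-text task description to a canonical task label.

    Unknown descriptions come back lowercased and whitespace-normalized, so
    they surface as candidates for review instead of being dropped.
    """
    key = " ".join(description.lower().split())
    return TASK_ALIASES.get(key, key)


def collapse(descriptions: list[str]) -> set[str]:
    """Collapse raw descriptions into the set of distinct tasks."""
    return {canonical_task(d) for d in descriptions}


raw = [
    "Stakeholder coordination",
    "Interagency consultation",
    "Getting everyone in the same room",
    "Collecting baseline environmental data",
]
distinct = collapse(raw)
print(len(raw), "descriptions ->", len(distinct), "distinct tasks")
```

The table is the point, not the code: every row added to it is two groups of people discovering they have been describing the same work.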

Ten System Types

The ten types are defined in the framework briefing released alongside this post. What matters here is the habit of checking which one you mean before you say "permitting software." I've sat in meetings where someone said "our permitting software doesn't work," and it took twenty minutes to figure out that the GIS team was talking about their geospatial data management, the front desk was talking about the applicant portal, and the director was talking about the case management system. Three different problems, three different budget lines, one word. Every tool in the inventory gets exactly one type. No double-counting. The discipline of picking one is what makes the inventory honest, and it gives you the question that tends to land hardest in agency conversations: when you say your permitting is broken, which of these ten things do you actually mean?

Eleven Actors

The eleven actors are organized into five groups: applicants and their consultants, the agencies that review (federal, state, local, tribal), the oversight layer at CEQ, FPISC, and OMB, the public, and finally the inspectors and courts who show up after the permit is issued or when something goes wrong. Most of the time when I list them, someone says, "Wait, you're counting Tribal Nations separately?"

Yes. And here's why. Only 27 of 305 tools I found (8.9 percent) explicitly support tribal coordination, and three entire system types have zero tribal-specific support. Tribes can flag impacts during planning, but have almost no way to verify that mitigation commitments are honored over the decades a project operates. That gap is invisible without a framework that treats Tribal Nations as their own category rather than folding them into "the public." Most failures trace back to two actors who needed each other's information and had no way to find it. The tribal gap is the starkest version of that pattern, but it's not the only one.

The Diagnostic Move

This is where the diagnosis happens.

When someone tells you their permitting is broken, the framework keeps you out of the application-form trap. You can ask three questions in order.

Which phase? Phase 1 means you can't tell where to put a project. Phase 2 means the review itself is slow. Phase 3 means you can't track what was promised after the permit was issued. Most people can answer in one sentence, and most of the time, the answer isn't the one the first complaint implied.

Which system type, if any? Is what you're calling a permitting problem actually a problem with one specific kind of system? Your geospatial data management is in seven incompatible formats. Your applicant portal can't pass attachments to your case management system. Your collaboration platform is email. Naming the system type changes the conversation from "fix permitting" to "fix the thing that's actually broken."

Or is it not a tool problem at all? Sometimes, two actors can solve a problem in five minutes if they can see each other's screens, and no software in the world is going to substitute for the meeting nobody scheduled. The framework surfaces when the problem is organizational rather than technological.

The answer to the first question usually reframes everything. "Permitting is broken" becomes "Phase 1 baseline data collection takes nine months because three agencies maintain different versions of the same wetland layer." That's a problem with a budget line attached. The original complaint wasn't.
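
If it helps to see the three questions as a procedure, here is a rough sketch of the order in which they narrow a complaint. The phase names come from this post; the `Diagnosis` record, its field names, and the `reframe` function are hypothetical scaffolding for illustration, not part of the framework briefing.

```python
from dataclasses import dataclass
from typing import Optional

# Phase names as used in this post.
PHASES = {
    1: "Siting and design",
    2: "Permit review",
    3: "Post-permit",
}


@dataclass
class Diagnosis:
    """Answers to the three questions (hypothetical structure)."""
    phase: int                          # Question 1: which phase?
    system_type: Optional[str] = None   # Question 2: which system type, if any?
    organizational: bool = False        # Question 3: not a tool problem at all?


def reframe(complaint: str, d: Diagnosis) -> str:
    """Turn a vague complaint into a statement that names where to look."""
    if d.phase not in PHASES:
        raise ValueError(f"unknown phase: {d.phase}")
    where = f"Phase {d.phase} ({PHASES[d.phase]})"
    if d.system_type:
        what = f"a problem with the {d.system_type}"
    elif d.organizational:
        what = "an organizational handoff problem, not a tool problem"
    else:
        what = "a problem not yet tied to a system type"
    return f'"{complaint}" -> {where}: {what}'


print(reframe("Permitting is broken",
              Diagnosis(phase=1, system_type="geospatial data management")))
print(reframe("Permitting is broken",
              Diagnosis(phase=3, organizational=True)))
```

The sketch only formats the answers. The real work is the conversation that produces them.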

If you take one thing from this post, take the question. It's worth more than the framework it came from.

A Note on Where You Sit

This diagnostic works for federal and state teams, but it plays out differently for each. If you're at a federal agency, some of what surfaces will run into walls you can't move on your own. Your case management is procured at the department level; your collaboration platform is whatever the OCIO authorized; your data standards are whatever the latest CEQ guidance says. The diagnostic still helps. At least you know which fight to pick and which to stop having.

If you're at a state agency, you probably control more of your own stack than you think. The same diagnosis that surfaces a fight at the federal level surfaces a to-do list at the state level. That asymmetry is one of the reasons the next piece in this thread is a Quick Wins Guide: small, scoped, controllable things that come into focus once you know which part is actually broken.

The Spine of the Inventory

The 300-plus tools in the Landscape Report exist because every tool was placed within this framework. Each one got a phase, a task list, a system type, and an actor map. Without those four dimensions, "305 tools" would be trivia. With them, you can ask how many tools do meaningful Phase 3 work (twenty-six), or how many have integration-ready APIs (more than 75 percent).
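
To make "four dimensions" concrete, one inventory row could be sketched roughly like this. Everything here is an assumption for illustration: the field names, the choice to store phases as a set, and the three placeholder tools are invented, and the `SystemType` enum lists only the four types named in this post rather than the ten defined in the briefing.

```python
from dataclasses import dataclass
from enum import Enum


class SystemType(Enum):
    # Only the system types named in this post; the briefing defines ten.
    GEOSPATIAL_DATA_MANAGEMENT = "geospatial data management"
    APPLICANT_PORTAL = "applicant portal"
    CASE_MANAGEMENT = "case management"
    COLLABORATION_PLATFORM = "collaboration platform"


@dataclass(frozen=True)
class Tool:
    """One inventory row (hypothetical schema)."""
    name: str
    phases: frozenset[int]      # assumption: a tool may touch several phases
    tasks: frozenset[str]
    system_type: SystemType     # a single field, so no double-counting
    actors: frozenset[str]
    has_api: bool = False


def count_in_phase(tools: list[Tool], phase: int) -> int:
    """How many tools do work in the given phase."""
    return sum(1 for t in tools if phase in t.phases)


def api_share(tools: list[Tool]) -> float:
    """Fraction of tools flagged as having an integration-ready API."""
    return sum(t.has_api for t in tools) / len(tools)


# Placeholder rows, not real products or real findings.
inventory = [
    Tool("Tool A", frozenset({1}), frozenset({"collecting baseline data"}),
         SystemType.GEOSPATIAL_DATA_MANAGEMENT, frozenset({"applicants"}), True),
    Tool("Tool B", frozenset({2}), frozenset({"routing applications"}),
         SystemType.CASE_MANAGEMENT, frozenset({"state agencies"}), True),
    Tool("Tool C", frozenset({2, 3}), frozenset({"tracking mitigation"}),
         SystemType.CASE_MANAGEMENT, frozenset({"inspectors"}), False),
]

print("Phase 3 tools:", count_in_phase(inventory, 3))
print("API share:", round(api_share(inventory), 2))
```

Once every row carries the same four dimensions, questions like "how many tools do Phase 3 work" become a filter rather than a research project.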

That last number keeps coming up in research. If more than 75 percent of these tools can already technically talk to each other, the interesting question is why they don't. The framework gives you a way to investigate that question rather than just ask it rhetorically. The Landscape Report laid out the evidence. The framework is what made the evidence sortable.

People sometimes receive this as a maturity model or a scorecard for ranking agencies. It is neither, and the difference matters. A maturity model tells you how well you're doing. This tells you where to look. It's a vocabulary and a coordinate system, and its job is to make conversations specific enough to be useful. Some categories will change as the validation work advances. Practitioner interviews, the PermitAI research with PNNL, and state engagements in Maryland and Pennsylvania, to name a few, are all stress-testing whether these are the right places to cut. Finding out which ones are wrong is the work.

What's Next

This post is paired with a framework briefing that walks through each of the four dimensions in detail: what each of the eighteen tasks involves, which tools fit each system type, who the eleven actors are, and where work actually fails between them. Think of this post as the question, and the briefing as the system for plotting your own answer.

After that, the next post is the Quick Wins Guide: concrete moves you can make once the diagnostic points to a smaller problem. These are things state agencies have actually done, without a federal mandate or an act of Congress, because somebody decided which part of the stack they were trying to fix and stopped trying to fix permitting in general.

If you've been carrying around a sense that something is broken but you can't name it, try the question. Ask which phase, which system type, and whose handoff. My experience has been that the answer is almost never where the original complaint pointed. It's almost never the fault of the person asking the question, either; the system is so complex that it's hard to see far enough inside it to diagnose the problem.

The 2026 Landscape Report and the announcement post introducing it are both available at policyinnovation.org. The EPIC Permitting Technology Framework Briefing, the structured reference behind this post, is released alongside it.