Expanded Interoperability and Systems: Building the Standards for Wildfire's Modern Digital Highway System

Welcome to our final Priority Win for establishing a national wildfire intelligence capability. If you haven't seen our three prior wins, or want to revisit them, check them out here: Governance Foundations, Operations - Research - Operations Pipeline, and Reciprocal Data Access.

Imagine an alternate universe where the U.S. never standardized the rules of the road for interstate highways. You cross a state line and suddenly have to switch from the right side of the road to the left, convert your speedometer from mph to km/h, and remember that green now means stop and red means go. In other words: a driver's dystopia.

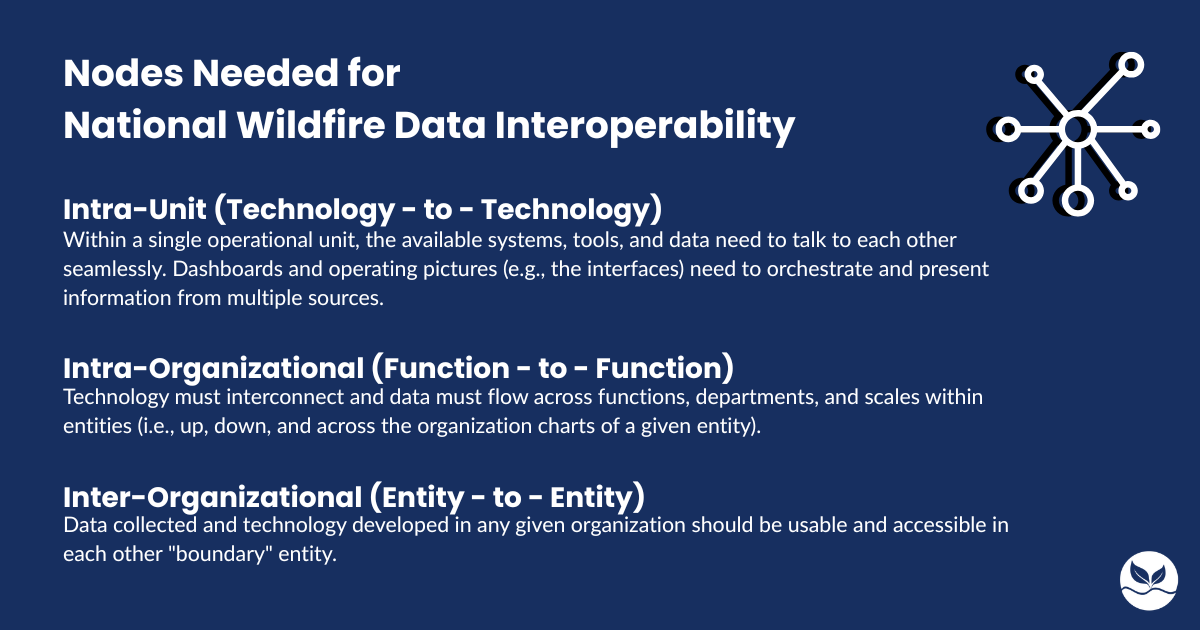

The current wildfire data and technology ecosystem is a milder version of this chaos. The work is shared across many jurisdictions: federal, state, county, municipal, Tribal, volunteer, private, academic, and NGO. Within those jurisdictions, responsibility is frequently fragmented across organizations. Within those organizations, it is further divided among operational units.

At each of these inflection points, the rules of the road can – and frequently do – change.

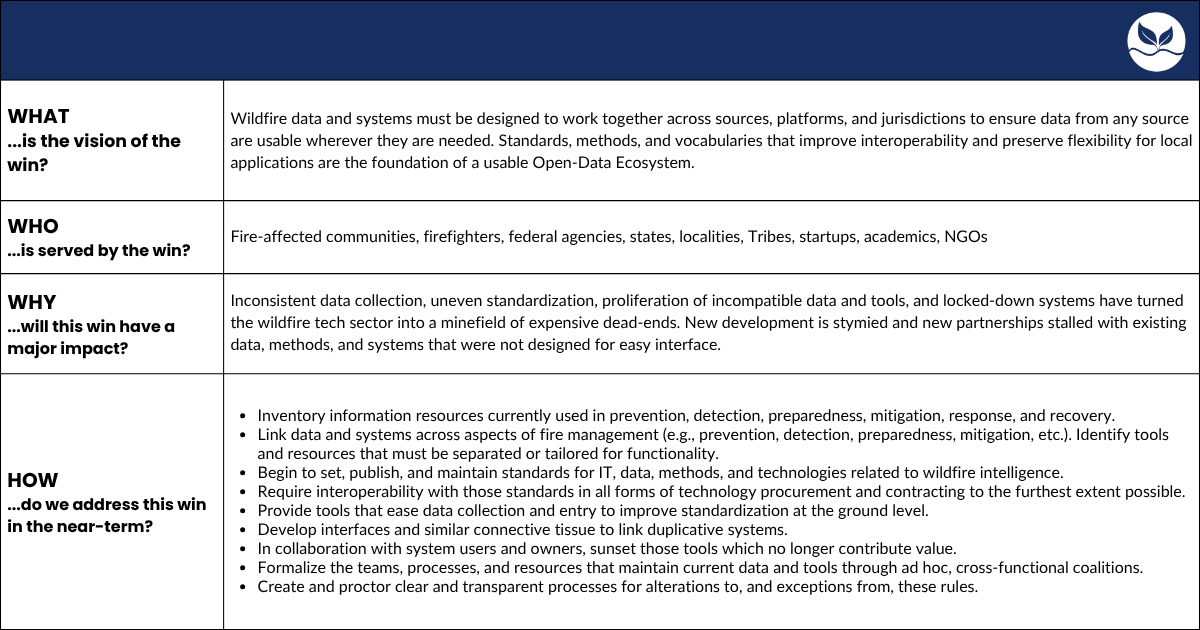

A successful national wildfire intelligence capability will connect these inflection points and unify these rules. We need standards that prioritize universal interoperability across data and systems while preserving flexibility for local specifics and applications. This is a tall order; several of the technologists and practitioners we spoke with described it as impossible.

But we've navigated by north stars for thousands of years without ever reaching them. In pursuing them, we improve on the status quo.

We have an opportunity to transform our wildfire data landscape from a scattering of isolated telegraph towers into the interconnected telephone lines of a functional open data ecosystem. To be effective and sustainable, each component must be fully compatible with the components at its boundaries.

This interconnectivity works on three primary axes: interface, metadata, and methodology.

Interface is the telephone: the means by which distinct entities pass information back and forth.

Metadata are the words: the individual units of meaning that convey information.

Methodology is akin to language: an overarching system of shared understanding that enables comparison, collaboration, and continuity.

Communicating and incentivizing standards for data collection and processing is the cheapest and most effective way to improve data interoperability across the system. Similarly, presenting interface standards as transparent requirements for internal development and external procurement will steer innovators toward designing tools that serve the collaborative, public mission of a national wildfire intelligence capability.

Blueprints for an Open Data Ecosystem

To deliver on these initiatives, national wildfire intelligence staff must begin an earnest and thorough research process to map the universe of interoperability successes and failures. The successes should then be formalized into initial standards, supplemented by solutions to the failures uncovered along the way. Adhering to these standards will be hard work for partner agencies, but the potential payoff is enormous. That payoff needs to be made explicit in every communication so that sectoral actors have a reason to put in the work. Our national wildfire intelligence capability needs to highlight the information sharing, tool development, and cost savings that interoperability enables.

Aligning to Create the “Digital DNA”

Sustaining an open data ecosystem hinges on compatibility. Aligning metadata and standards to create a "digital DNA" makes it possible for new data, systems, and entire organizations to enter the field. Greater access to information, seamless interfacing, and fresh eyes create opportunities for solutions where none previously existed. By establishing centrally governed standards for data definitions, file types, and core procedures, the system can break down the silos and reduce the manual errors that currently stall scientific discovery and technology adoption. These high-level standards should be communicated and enforced through transparent contract language. To maximize engagement and resilience over the long haul, the standards and contracts should leave local operators enough flexibility to keep applying their discretion and expertise in context.

Consistent Process, with Local Flexibility

True interoperability relies on a "consistent process, with local flexibility" model that empowers stakeholders to tailor specific data inputs and tools to unique environments. As with many topics connected to the national wildfire intelligence capability, centralizing interoperability carries the risk of negative externalities if pursuing it erases local knowledge and agency. Through collaborative investment in shared standards and repositories, wildfire agencies can move toward a unified infrastructure that supports transparent, explainable, and accountable outcomes across all levels of the public and private sectors.