National Approach to Wildfire Data and Technology: Equal and Reciprocal Access to Federal Data for Non-Federal Partners

What’s the best potluck outcome? A beautiful spread, evenly balanced across dish types, where everyone brings their best recipe to the table and gets to pick and choose a plate that’s perfect for their palate. But have you ever been to a potluck where a bunch of people show up empty-handed? Or with a beverage “...just for me?” Even worse: one where the host doesn’t coordinate who is bringing what, and you end up with a trestle table full of veggie casseroles?

A healthy potluck ecosystem and a healthy wildfire data ecosystem share a core truth: when everyone knows what to bring, knows what’s available, and does their part, everyone wins.

As we’ve discussed throughout this series, the success of the nation’s proposed wildfire strategy relies heavily on the effective deployment of a new national wildfire intelligence capability. This capability is to be the definitive, cross-boundary integration layer for all wildfire data and technology.

The current system, meanwhile, remains deeply fragmented. Non-federal users are often forced to rely on personal connections rather than standardized processes to access federally managed data. Conversely, federal data managers for existing hubs struggle to consistently pull in non-federal data, creating a two-way “access and input” disconnect. These gaps stifle research, hamper decision-making, and sideline life-saving technologies. Furthermore, this “who-you-know” data access landscape frequently excludes those data developers and end-users who lack the legacy connections required to fully participate.

Drawing on lessons from experts, we recommend that data-sharing policies and technologies be explicitly designed for barrier-free reciprocity. Data production should not be centralized. Instead, distributed producers should interact seamlessly with an integrated national picture. By breaking down silos and pooling resources through open-access data hubs, the national capability can serve as the “potluck coordinator” that places more complete and effective data directly in the hands of those who need it most.

Agreements for Streamlined and Effective Partnership

A modern national wildfire innovation strategy uses data and technology sharing agreements to make cooperation natural and mutually beneficial. Where these agreements are already working, all sides benefit from greater access to needed data and lower costs from avoiding duplicative systems. One of the first steps toward creating a national wildfire intelligence capability is to integrate and update existing data sharing agreements while developing and formalizing new connections to fill the gaps between them.

Every day without these agreements is a day that the true vision of national wildfire intelligence as a seamless integration hub goes unrealized. In its first year, any national capability should focus on establishing the necessary memoranda of understanding (MOUs) or other formal agreements with its core partners.

Ultimately, these agreements must define clear roles and responsibilities for dataset curation across all sectors. Importantly, they must also be designed to enable full participation from Tribal, state, local, and other non-federal stakeholders. This reciprocal exchange can transform raw, disparate data into a curated, strategic asset that improves decision-making across boundaries and maximizes the value of taxpayer dollars.

Processes, Standards, and Roles & Responsibilities

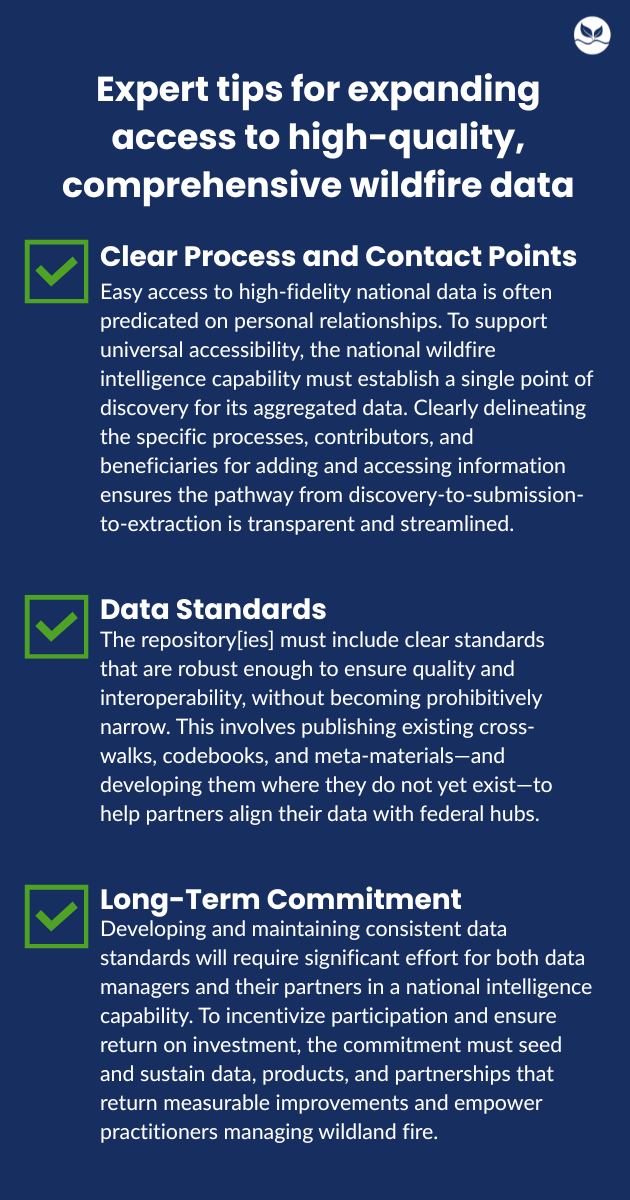

Beyond formal agreements, success will depend on moving away from the “who-you-know” (or “what-you-can-pay”) landscape that currently defines wildfire data. This shift requires outreach campaigns to onboard non-federal partners and continuous collaboration to maintain data integrity over time.

In our research, we heard from technologists and practitioners who worked across agencies and jurisdictions on data repositories like LANDFIRE, the USDA Forest Service’s Risk Management Assistance (RMA) Dashboard, and more localized efforts such as the Rogue Basin All-Lands Forest Restoration Explorer. These data managers described data aggregation that was initially cumbersome and under-resourced. Over time, however, some hard-earned lessons have emerged, leading to improvements in the overall quality and completeness of these systems:

The next and last post in our national approaches to wildfire data and technology series will focus on priority wins for expanding interoperability across the ecosystem.