The Missing Link in Environmental Tech Adoption: People

The fields of “nature tech” and “environmental tech,” which span everything from advanced sensors and remote monitoring hardware to sophisticated data analytics and AI-powered software, are experiencing an unprecedented boom in innovation. Whether the focus is forestry, biodiversity, or wildfire, environmental practitioners have never had access to such a diverse and powerful suite of tools for managing, monitoring, and protecting natural resources. Yet for many public sector agencies and non-profits, this technological surge remains an unfulfilled promise. Despite the abundance of options, the expected benefits (a leap in productivity, dramatically improved decision support, and greater transparency in resource management) have been slow to materialize, which suggests a critical disconnect between technological readiness and organizational adoption. At the heart of this disconnect is a fundamental truth we often ignore: technology doesn’t adopt itself. People do. Still, relatively little attention goes to ensuring that both technology and people are ready and performing at their best at the same time.

In EPIC’s work on environmental technology adoption in the public sector, several related patterns emerge over and over:

There are tons of ideas for how to better use data and technology, and they can come from many vantage points: practitioners, non-profits, researchers, technologists, public servants, private partners. These ideas are developed into technology products by a diversity of “providers” that don’t always fit the traditional procurement pathways for adopting technology.

It’s easy to start developing and piloting, but hard to deploy and scale in the public sector. Public agencies with natural resource missions typically have poorly marked roadmaps for carrying ideas to operations.

Miscommunications are common between technology developers (be they in the public or private sectors) and the agencies and partner organizations that are deploying the technology. Developers and agencies frequently assume that both the technologies and the adopting agencies are readier than they actually are.

More than ever, technology needs to be adopted across jurisdictional boundaries – federal, state, local, and private. Doing so requires significant investment in the relationships and standards that enable interoperability – all of which depend on shared language.

Technology adoption efforts mostly focus on getting the technology into a new organization, a heavy lift in and of itself, rather than on making sure it is used to its full potential once it is there. There’s a tendency to underinvest in developing staff’s comfort and proficiency with the technology, and as a result adoption frequently falls short of its potential benefits. We call the combination of successful deployment and full employment Authentic Adoption.

Technologists and environmental practitioners speak different languages. They need better ways to communicate about their progress and needs to ensure that technology adoption succeeds.

Addressing these challenges starts with a shared understanding of the destination – that is, full and authentic technology adoption – and the steps it takes to get there. Frameworks for tracking how ready a technology is have long been used in research. The gap we see is a framework designed to track the “people side” of technology adoption with the same rigor we apply to the code and hardware.

How Other Agencies Approach This Problem

For decades, federal agencies with a strong R&D focus, including DOD, NASA, and DOE, have leaned on Technology Readiness Levels (TRLs) to measure progress toward operations-ready technologies. TRLs track the development of the technology itself, from ideas and scientific principles (level 1) all the way to a piece of hardware or software that is operations ready (level 9). TRLs are great at describing and tracking the iterative stages of technology improvement. They are, however, remarkably silent on the human element: has the technology been built with input from the public servants who will ultimately use it in their day-to-day work? Has it actually been tested in environments that truly mirror reality?

Over the past few years, Human Readiness Levels (HRLs) have emerged as a complement to TRLs and begun to fill this gap. They speak to the importance of building technology with an understanding of how practitioners will use it in the field: what harsh conditions will it face, how much time does a person have to enter information, and what is connectivity actually like away from the office or lab? HRLs are well suited to structured research and development enterprises, like those at the Department of Defense and the Nuclear Regulatory Commission, where R&D is highly integrated with the operations it serves. Environmental agencies also benefit from using TRLs and HRLs to understand what is required to prepare the technology they want for deployment, but these scales don’t fully reflect the reality of technology adoption that we see.

How Public Sector Environmental Work is Different

Public sector environmental work depends on coordination to an extent not seen at every federal agency. To get work done on the ground, agencies like the Forest Service and EPA need a web of technologies that is spread across multiple agencies (e.g., endangered species data from the Fish and Wildlife Service informing decisions in National Forests), disciplines (e.g., research data used to design forest treatments), and sectors (non-profit and private sector partners often implement on behalf of the agencies). Like the work performed by and for environmental agencies, decision-making about which technologies to use day to day is often decentralized. Those decisions can reside at the Department, Agency, Regional Office, or Field Office level, or with Contractors and Grantees, which creates a patchwork of uncertainty. Many different types of organizations play the role of technology developer (research teams at universities and labs, technology giants, and small startups all regularly create new software for environmental agencies), which makes coordination harder still.

One main implication of all this is the need for shared language around technology adoption that applies flexibly across highly variable organizational contexts. It has to be accessible enough to help a program manager in the Department of the Interior ask questions and understand when and how a satellite launched by a non-profit can be integrated into their operations, while also making it easier for a large community-based non-profit to ask questions and decide whether to use eDNA sampling to monitor biodiversity. Plain-language versions of TRLs and HRLs, supplemented by more detailed assessments of how each technology can be integrated alongside existing technology, can partially meet this need. However, they are less well suited to tracking how much work it will take to ensure the people in an organization are equipped to use technology to its full potential.

What the Research on Technology Adoption Tells Us

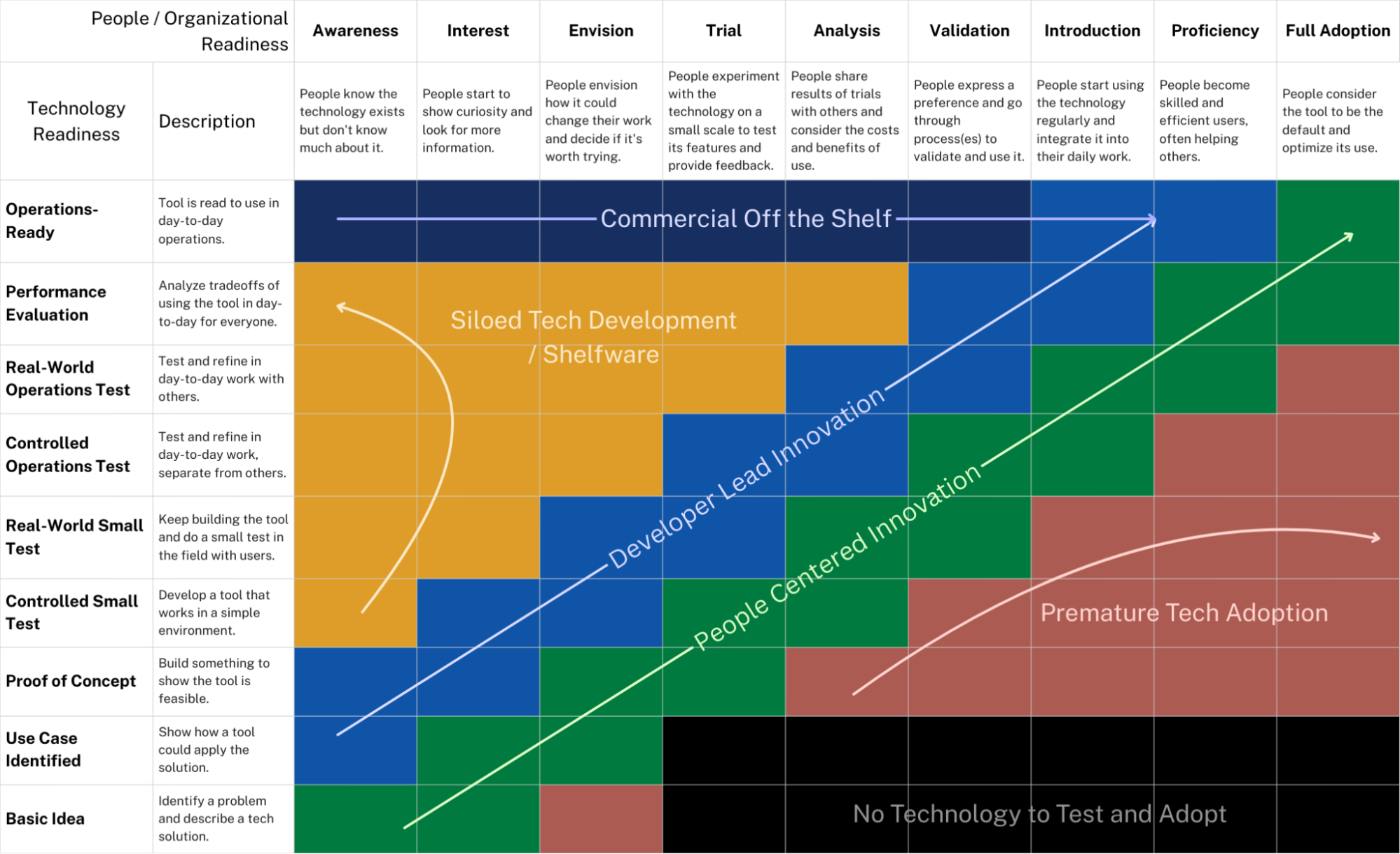

Research on technology adoption has generated many insights into how people get progressively more comfortable and proficient with technology and what makes those efforts successful. However, a single framework has not yet emerged to track this progression. So, as an experiment to fill this gap, we drew on our experience to create our own nine-level, plain-language scale for People Readiness that looks at the actual journey of those who are meant to benefit from the adoption of technology. It starts with awareness that the technology exists, moves through progressively stronger motivation to first think about and then test the technology, and ends with efforts to intentionally introduce the technology, build proficiency, and maximize its use within the organization.

1. Awareness: People know the technology exists but do not know much about it.

2. Interest: People start to show curiosity and look for more information.

3. Envision: People envision how it could change their work and decide if it is worth trying.

4. Trial: People experiment with the technology on a small scale to test its features and provide feedback.

5. Analysis: People share results of trials with others and consider the costs and benefits of use.

6. Validation: People express a preference and go through process(es) to validate and use it.

7. Introduction: People start using the technology regularly and integrate it into their daily work.

8. Proficiency: People become skilled and efficient users, often helping others.

9. Full Adoption: People consider the tool to be the default and optimize its use.
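For teams that track readiness in a spreadsheet or script, the nine levels above could be encoded as an ordered scale. The sketch below is only one possible encoding: the class name `PeopleReadiness`, the helper `next_step`, and the numbering from 1 to 9 in the order listed are our assumptions for illustration, not part of any published standard.

```python
from enum import IntEnum


class PeopleReadiness(IntEnum):
    """Hypothetical encoding of the nine levels, numbered in the order listed."""
    AWARENESS = 1
    INTEREST = 2
    ENVISION = 3
    TRIAL = 4
    ANALYSIS = 5
    VALIDATION = 6
    INTRODUCTION = 7
    PROFICIENCY = 8
    FULL_ADOPTION = 9


def next_step(level):
    """Return the level that follows `level`, or None once Full Adoption is reached."""
    if level is PeopleReadiness.FULL_ADOPTION:
        return None
    return PeopleReadiness(level + 1)


if __name__ == "__main__":
    current = PeopleReadiness.TRIAL
    print(current.name, "->", next_step(current).name)
```

Because the levels are ordered integers, two teams or offices can be compared directly (for example, `PeopleReadiness.TRIAL < PeopleReadiness.PROFICIENCY`), which is the main reason to prefer an ordered type over free-text labels.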

What People Readiness Tells Us

Plotting People Readiness against Technology Readiness in a matrix helps visualize the progression (and pitfalls) that many technology developers and adopters face when working together. The most typical paths to technology adoption that we see are Developer-Led Innovation (where a technology developer tries to bring the adopting organization along with them) and Commercial-off-the-shelf (COTS) adoption (where a tool that has been built and deployed in some organizations is brought to a new one, for example a different state government). These models of adoption can work, but often the most successful path to full adoption is a People-Centered Innovation model, in which the adopting agency takes the lead on developing a clear problem statement, exploring different technological approaches, and then iteratively working with a developer to refine and test the technology while training its workforce to use it. If technology readiness and people readiness progress at different rates, technology development can easily veer off course, either becoming siloed from the organizations it’s meant to serve or being deployed too early and losing credibility through poor performance. Looking at technology adoption across these two dimensions can help everyone involved plan the steps needed to get to the desired state and set realistic goals and expectations.

The Matrix lets managers see these misalignments in real time. Imagine a team of foresters being handed a cutting-edge LiDAR mapping tool. On the TRL scale, that tool might be an “8” or “9”: mission-proven, ready to go, and used extensively in private forestry. But if the foresters in government agencies are at a “1” or “2” on the People Readiness scale (barely aware of the tool, or only beginning to look into it), the innovation will not yield the intended benefits. If your tech is at a “7” (Full-scale Field Test) but the people who will use it are still at a “2” (Interest), you likely don’t need more developers. You need better communication, training, and “connective tissue” to bridge the gap.
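The comparison in this example can be sketched as a simple gap check. This is an illustration under stated assumptions, not an EPIC tool: the function name `readiness_gap`, the shorthand `prl` for the People Readiness level, and the tolerance of two levels are all hypothetical choices, and both scales are assumed to run from 1 to 9.

```python
def readiness_gap(trl: int, prl: int, tolerance: int = 2) -> str:
    """Compare a Technology Readiness Level with a People Readiness level.

    `tolerance` is an assumed threshold: gaps up to this size count as aligned.
    """
    for name, value in (("trl", trl), ("prl", prl)):
        if not 1 <= value <= 9:
            raise ValueError(f"{name} must be between 1 and 9, got {value}")

    gap = trl - prl
    if gap > tolerance:
        # Technology is well ahead of the people who are meant to use it.
        return "people lag: invest in communication and training"
    if gap < -tolerance:
        # People are ready before the technology is.
        return "technology lags: risk of deploying too early"
    return "aligned"


if __name__ == "__main__":
    # The example from the text: tech at 7, people at 2 (Interest).
    print(readiness_gap(trl=7, prl=2))
```

A real assessment would weigh more than one number per axis, but even this rough check makes the point of the matrix concrete: the action to take depends on which side is behind, not only on how mature the technology is.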

“Readiness” at an adopting organization often has other dimensions, including cybersecurity and interoperability with existing systems, that may require changes at the organization before it can adopt a new technology. Those dimensions benefit from tracking as well, but People Readiness gets at the first and most essential piece of technology adoption: people’s informed decision to invest their time and energy in understanding and using the technology.

We Need to Talk… About Authentic Adoption

Having shared language around full and authentic technology adoption is a first step toward making sense of the environmental technology ecosystem. Without it, technology developers, environmental agencies, and their partners will continue to talk past each other and lament missed opportunities. We also know that shared language becomes truly actionable only when it is paired with a playbook for improving people readiness, one that draws on research and on experience gained at a variety of agencies. If this resonates with you, drop us a line!